Smart city and surveillance measures: a conversation with Myrtille Picaud and Régis Chatellier

The massive spread of digital technologies in urban areas has raised new questions about the use of data. Based on new economic models, the use of data collected in urban areas, whether private or public, raises many political and ethical issues. Facial recognition is a growing source of concern, fueled by fears of a smart city with authoritarian tendencies and overzealous security.

On 10 September 2019, La Fabrique de la Cité invited Régis Chatellier, a foresight project manager at the CNIL, and Myrtille Picaud, a postdoctoral researcher with the “Cities and tech” chair of the École urbaine de Sciences Po, to discuss whether the smart city is compatible with democratic principles.

La Fabrique de la Cité: Does the spread of new technologies in urban areas have a direct effect on individual behaviors?

Régis Chatellier: Before discussing the city, I think it is important to underline that surveillance made possible by technology has been affecting individuals for a long time. In 2016, the University of Oxford published a study showing that visits to terrorism-related Wikipedia pages decreased in the aftermath of the Snowden case, because individuals have since feared that states monitor their online activity. More specifically, individuals fear that an algorithm will misinterpret their interest in subjects related to terrorism. There is even an expression for a change in online behavior driven by the fear of being accused of reprehensible intentions: the “chilling effect”.

Myrtille Picaud: In the city, the increasingly massive collection of data affects the very definition of the norm, even though it does not necessarily change individual behaviors. It prescribes what is considered an appropriate use of public space from a collective standpoint. Smart lighting is, I think, a good illustration. Putting sensors on streetlamps so that they light up precisely when someone passes by suggests that the street is mainly a place of passage: public space at night is treated as somewhere to pass through rather than somewhere to spend time. A technology whose main goal is energy saving, or potentially safety, thus helps define desirable futures for the city and its public spaces and seeks to actively guide behaviors. The use of technological devices therefore reflects the way spaces are conceived and helps shape how public spaces are appropriated.

« In the city, the increasingly massive collection of data affects the very definition of the norm. »

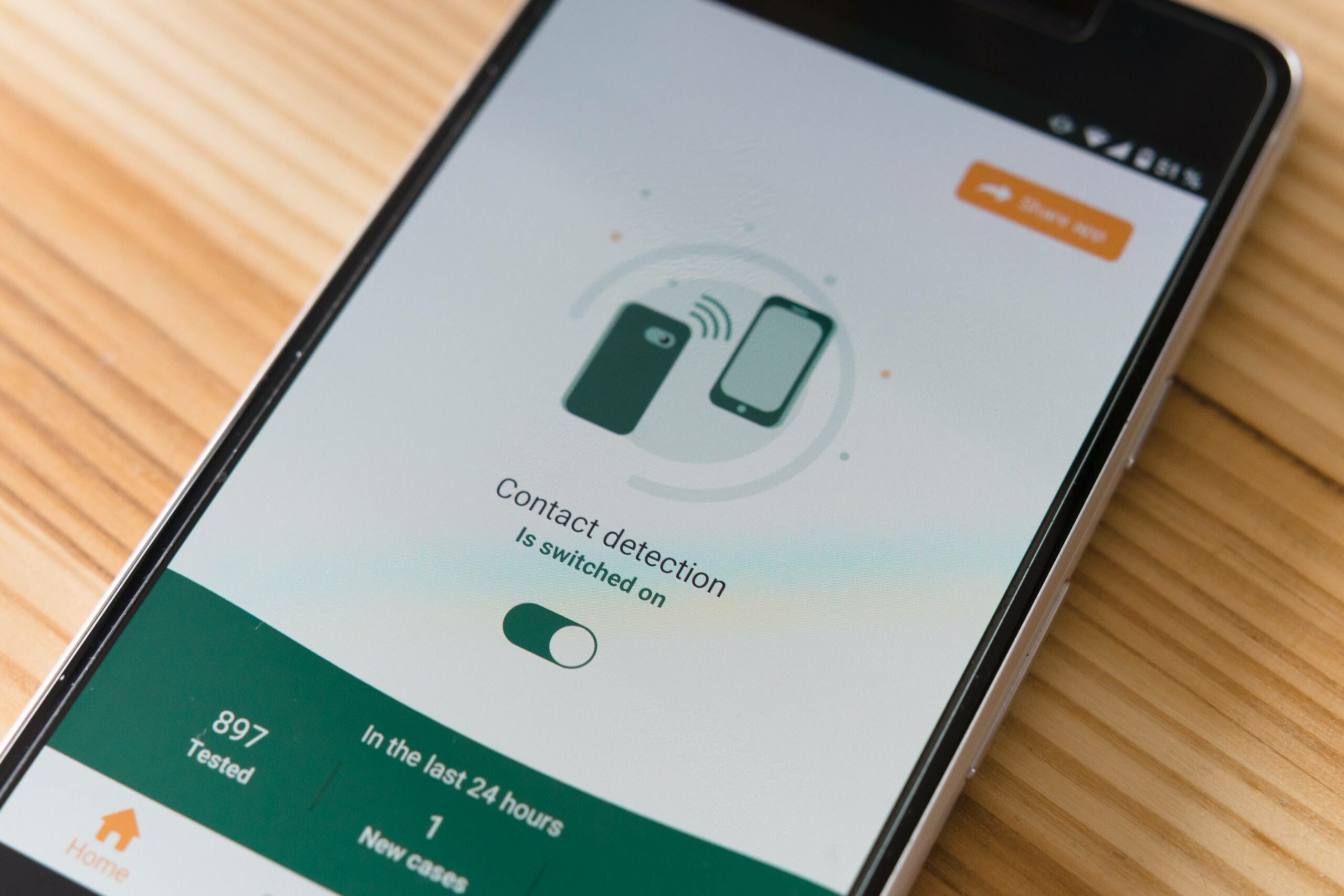

LFDLC: In a context where our mobile phones can constantly geolocate us and video-surveillance devices are massively deployed in cities, is it still possible to remain anonymous in the public space?

Régis Chatellier: In October 2017, the CNIL published an “Innovation and foresight” study entitled La plateforme d’une ville (A city’s platform), in which we asked whether it is possible to imagine a “private navigation” mode in the public space, similar to private browsing modes on the Internet. Even though facial recognition was not a key issue at that time, we knew that with the increase of data collected in the city, our comings and goings could potentially be followed using geolocation. Besides, it is worth recalling that the first sensor is the one we all have in our pocket: with the smartphone, we have outfitted ourselves with an infrastructure that enables our surveillance. In our study, we highlighted the fact that the concept of anonymity in the public space should be at the heart of the debate, especially as that anonymity is gradually vanishing. Today, with facial recognition in the public space, a milestone has been passed, as it is no longer only a matter of collecting relatively classic personal data, like the kind that can be obtained through geolocation. Facial recognition enables the extraction of biometric data, which is sensitive personal data because it is linked to an individual’s physical characteristics. To recognize an individual, the system must store a biometric template, which is created from a geometrical shape based on parts of the face. To carry out facial recognition in the public space, the system must then compare that template against a potentially very large database, one that could eventually contain the entire population, and look for similarities and a “match”. This implies several risks, beginning with cybersecurity: the database could one day be hacked and information leaked, all the more easily if the database is centralized.

« It is worth recalling that the first sensor is the one we all have in our pocket: with the smartphone, we have outfitted ourselves with an infrastructure that enables our surveillance. »

LFDLC: Are recognition technologies, which are currently at the experimental stage under real conditions, reliable?

Myrtille Picaud: Beyond biases in algorithms, which have trouble recognizing Black people and women, the reliability of facial recognition systems is still limited. The South Wales Police published some figures in 2017 that are very revealing in this regard. A facial recognition technology was tested during certain large events, specifically certain Champions League games, and it was revealed that 93% of alerts were in fact “false positives”, meaning that the algorithm flagged someone in the crowd as a wanted person but had actually misidentified them. Only 7% of alerts were therefore “true positives”. It is quite disturbing to consider that such inefficient systems could, in the end, be used by police departments, even though they have evolved since these figures were announced. These experiments also highlight how the use of facial recognition in the public space is becoming socially normalized, especially during festive events like games or concerts. Whether it is used for policing or commercial purposes, facial recognition raises many political issues.

Régis Chatellier: Facial recognition is indeed a probabilistic system. If the settings are too strict, requiring a very close match with an individual’s biometric template, the system won’t be able to identify that individual without a high-quality picture. Conversely, if the settings are too flexible, there will be countless false positives. An experiment carried out during the Notting Hill festival in London in 2017 produced 98% false positives[1].
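
The trade-off between strict and flexible settings can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the three-number “templates”, the names `WATCHLIST` and `PROBES`, the use of cosine similarity, and the threshold values. Real systems use much larger templates and their own matching methods, so this should be read as a toy model, not as a description of any deployed system.

```python
import math

def cosine_similarity(a, b):
    """Similarity between two templates: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms

# Hypothetical watchlist: name -> stored biometric template (toy values).
WATCHLIST = {
    "person_A": [0.9, 0.1, 0.3],
    "person_B": [0.2, 0.8, 0.5],
}

# Hypothetical camera captures: (description, template, true identity or None).
PROBES = [
    ("sharp photo of A", [0.88, 0.12, 0.31], "person_A"),
    ("blurry photo of A", [0.7, 0.3, 0.45], "person_A"),
    ("passer-by 1", [0.6, 0.5, 0.4], None),
    ("passer-by 2", [0.3, 0.6, 0.7], None),
]

def best_match(template, threshold):
    """Return the closest watchlist name, or None if it scores below the threshold."""
    name, score = max(
        ((n, cosine_similarity(template, t)) for n, t in WATCHLIST.items()),
        key=lambda pair: pair[1],
    )
    return name if score >= threshold else None

def evaluate(threshold):
    """Count correct alerts, wrong alerts, and missed watchlist members."""
    counts = {"true_positives": 0, "false_positives": 0, "missed": 0}
    for _description, template, truth in PROBES:
        match = best_match(template, threshold)
        if match is None:
            if truth is not None:
                counts["missed"] += 1
        elif match == truth:
            counts["true_positives"] += 1
        else:
            counts["false_positives"] += 1
    return counts

print(evaluate(0.99))  # strict: 1 true positive, 0 false positives, 1 missed
print(evaluate(0.80))  # flexible: 2 true positives, 2 false positives, 0 missed
```

With the strict threshold, the blurry photo of a person who is on the watchlist goes unrecognized; with the flexible one, both passers-by trigger an alert.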

LFDLC: The collection of data, especially biometric data, within the public space is increasing significantly. Could this lead to favoring security over individual freedom?

Myrtille Picaud: The deployment of facial recognition systems, which is one aspect of safe city projects, raises many issues. Those issues need to be placed within a broader question: how should we think about surveillance? Foucault made a distinction between two types of devices. Briefly, the security device affects the entire population and relies on tools that define a norm, in the statistical sense. Depending on this statistical analysis, groups of people will be targeted because they are outside the norm, and we will try to bring them back into the norm. This device does not necessarily exercise direct coercion, unlike the disciplinary device, with which it can be associated. The latter, of which prison is a typical example, applies to an individual considered as deviant, and constrains that individual’s body in a specific place and time. It tries to fit the individual into a norm that is defined based on values and on what we consider good behavior. Those norms and devices operate within power relations between social groups and therefore target certain groups specifically, for instance working classes, victims of racism, and so on.

In the city, we notice a certain number of devices that combine disciplinary and security aspects. For instance, crime mapping is a security tool that allows for the targeting of several neighborhoods where crime rates are higher, to try to bring them back into the statistical norm. This triggers disciplinary measures because the massive deployment of police forces will lead to more checks and can result in fines or even arrests. If we think about this articulation between discipline and security in the public space, it is possible to think of surveillance as a population management method and not only as a device that applies to the individual. The goal of these devices is not only to exercise a constraint on individuals; it is also to manage an entire population through data collection, by disparately targeting certain spaces and social groups. I would also add that the crime maps mentioned, which are now used to rationalize police activity (see the work of Bilel Benbouzid), are very problematic. For instance, in a neighborhood identified as having a higher crime rate than average, increased police checks can lead to an increase in data signaling offences in this neighborhood. The algorithmic processing of that data can then lead to a vicious circle, causing “overtargeting” of this neighborhood and the control of some populations considered as “at risk”, which therefore reinforces the social phenomena we otherwise notice.
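
The vicious circle described above can be sketched as a small simulation. The model is deliberately crude and entirely hypothetical: two made-up neighborhoods, `north` and `south`, are assumed to have the same underlying level of offences; each patrol is assumed to record exactly one offence wherever it is sent; and the allocation rule (the neighborhood with the most recorded offences receives most of the patrols) is an assumption chosen for illustration, not a description of any real predictive policing tool.

```python
def simulate(rounds=5, hot_spot_patrols=70, other_patrols=30):
    """Simulate recorded offences when patrols follow past recorded offences.

    Assumption: each patrol records one offence, whichever neighborhood
    it is sent to, so the recorded data only reflects where police look.
    """
    # Nearly identical starting data: one extra recorded offence in "north".
    recorded = {"north": 11, "south": 10}
    history = []
    for _ in range(rounds):
        # The algorithm flags the neighborhood with the most recorded offences.
        hot_spot = max(recorded, key=recorded.get)
        for name in recorded:
            patrols = hot_spot_patrols if name == hot_spot else other_patrols
            recorded[name] += patrols  # more patrols, more recorded offences
        history.append(dict(recorded))
    return history

for round_number, snapshot in enumerate(simulate(), start=1):
    print(round_number, snapshot)
# Final round prints: 5 {'north': 361, 'south': 160}
```

In this toy model, a one-offence difference in the initial data grows into a gap of 201 recorded offences after five rounds, even though the two neighborhoods behave identically. The gap is produced by where the patrols are sent, not by any difference in offending.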

« The goal of these devices is not only to exercise a constraint on individuals; it is also to manage an entire population through data collection, by disparately targeting certain spaces and social groups. »

Régis Chatellier: For now, facial recognition systems cannot be used for sovereign purposes in France. This could not be achieved without a change in the legislation, which currently regulates only the use of video and does not cover recognition technologies. In September 2018, faced with the development of those technologies and their possible use in the public space, the CNIL expressed the need to engage in a public debate in order to identify a legal framework that could strike a balance between security and the protection of rights. The use of facial recognition in the public space will become possible if a law or a public decree by the Conseil d’État (France’s highest administrative court) allows it. But if the major stakes are not defined clearly, we risk a hasty decision and legislation that would not be respectful enough of individual freedoms.

Myrtille Picaud: This debate is all the more urgent as several public and private stakeholders, especially from the security industry, call for authorization of facial recognition in the public space ahead of the 2024 Olympic Games. Ideally, the authorization would be announced by 2023 so that systems can be tested out during the Rugby World Cup and thus be fully operational for the Olympic Games.

LFDLC: Overall, is the smart city heading towards a city of mass surveillance?

Régis Chatellier: One of the biggest dreams of the smart city, expressed in the 1960s although the term did not exist at that time, was to monitor the whole city. This seems possible in states where private stakeholders have no choice but to cooperate with the public sector, such as China. Indeed, a system of highly developed social control is conceivable in China because the private sector is at the service of the state. In more democratic regimes, the multiplicity of stakeholders ensures that no single stakeholder can exert widespread surveillance.

Myrtille Picaud: As mentioned earlier, one of the risks of constantly developing more security devices in the public space has to do with socio-spatial inequalities, which come with the stigmatization of working-class neighborhoods and of certain social groups, over which control increases first. In addition, the normalization of these security devices goes hand in hand with the development of a culture of anticipation, prediction, or “alertness”, in the words of Emmanuel Macron, that transforms our representations and customs.

LFDLC: Are there large movements that oppose the possible end of privacy in the public space?

Régis Chatellier: There are no such large protest movements, although there is an active nonprofit sector, driven by organizations like Privacy International or La Quadrature du Net in France. We could draw a parallel with the environmental issue: the subject can sometimes seem too distant for individuals to really feel concerned about it. They know there is a risk, but they only become aware of its magnitude once they are personally exposed to it. The same logic applies to the data issue: unless we are identified by mistake by a facial recognition tool, or subjected to algorithmic bias, we are not fully aware of the scope of the debate. However, my remarks should be nuanced, as video surveillance is the subject of most of the complaints the CNIL receives. But those complaints are limited to individual actions, and there is no great movement yet, even though civil society has a central role to play on these issues.

Myrtille Picaud: I concur: there is currently no massive resistance movement around the facial recognition issue, even though La Quadrature du Net is now mobilizing on these questions. This low politicization can be explained by the plasticity with which technologies are appropriated. In her book, L’oeil sécuritaire, sociologist Élodie Lemaire explains that video-surveillance, which was initially developed for security purposes, has been put to a variety of uses. For instance, video-surveillance in stores or public transport is at times used more for monitoring staff than for watching “delinquents”. One technology is therefore subject to distinct uses, depending on variable objectives, which means that opposition is expressed in various ways and sometimes without warning.

La Fabrique de la Cité

La Fabrique de la Cité is a think tank dedicated to urban foresight, created by the VINCI group, its sponsor, in 2010. La Fabrique de la Cité acts as a forum where urban stakeholders, whether French or international, collaborate to bring forth new ways of building and rebuilding cities.